AI vs Manual Follow-Up: Which Closes More Deals?

AI-assisted follow-up outperforms fully manual follow-up on speed and consistency — but the best close rates come from a hybrid approach where AI drafts and a human reviews before sending. The debate over automated vs manual email misses the real question: not whether to use AI, but where in the follow-up process it belongs.

Here's what the research actually shows, and what it means for how you handle post-meeting outreach.

Why Speed Is the Whole Ballgame

This part isn't debatable. A study published in Harvard Business Review found that companies that tried to contact inbound leads within an hour were nearly 7x as likely to qualify them as companies that waited even one hour longer. For post-meeting follow-up, the dynamic is similar: the mental window where your call is still top-of-mind for a buyer is short.

Industry data from Velocify puts it more bluntly: the odds of contacting a lead decrease by over 10x within the first hour. After 24 hours, you're fighting inertia, competing with three other vendors they've since talked to, and hoping they even remember what you covered.

Manual follow-up loses this race by default. Not because reps are lazy — because they're busy. Back-to-back calls, CRM updates, internal Slack threads. The follow-up email that should take 20 minutes ends up taking two hours, or it doesn't happen at all.

AI-assisted drafting closes that gap. Transcript in, draft out in under a minute. The rep still reviews, edits, and sends — but the blank-page problem is gone.

What "Automated" Actually Means (And Where It Goes Wrong)

This is where the AI vs manual sales follow-up comparison gets muddier. "Automated" covers a wide spectrum:

- Fully automated (no human review): System fires a templated email the moment a meeting ends. Fast. Impersonal. Often flagged as spam or ignored.

- AI-drafted, human-reviewed: AI generates a personalized draft from the transcript. Human reads it, tweaks it, sends it. Fast and personal.

- Manual (fully human-written): Rep writes from scratch after the call. High quality when it happens. Happens less often than it should.

The data on fully automated, no-review sequences is mixed. Gartner research on B2B buyer preferences consistently shows that buyers are getting better at detecting templated outreach — and they're deleting it faster. A follow-up that references nothing specific from your conversation signals that you weren't really listening.

That's the failure mode of pure automation: it's fast but it's generic, and generic doesn't close deals.

The failure mode of pure manual: it's personal but it's slow, and slow doesn't close deals either.

The hybrid — AI drafts from your actual transcript, you review and send — is where the results stack up. You get the speed of automation with the specificity of something that sounds like you wrote it, because you actually reviewed it and it references the real conversation.

ReplySequence does this automatically — paste any transcript, get a branded follow-up sequence back in 60 seconds.

The Personalization Problem With Pure Automation

Here's the knock against automated email that never goes away: personalization at scale is hard. Most automated follow-up tools work from templates. They can merge in a first name and a company name, but they can't reference that the prospect mentioned their Q3 budget freeze, or that they're evaluating two other vendors, or that they need sign-off from someone in legal before moving forward.

That context lives in the transcript. If your follow-up tool isn't reading the transcript, it's not actually personalized — it's just mail merge with extra steps.

This is the distinction that matters when you compare AI sales results. The question isn't "AI or human?" It's "does the AI have access to the actual content of the conversation?"

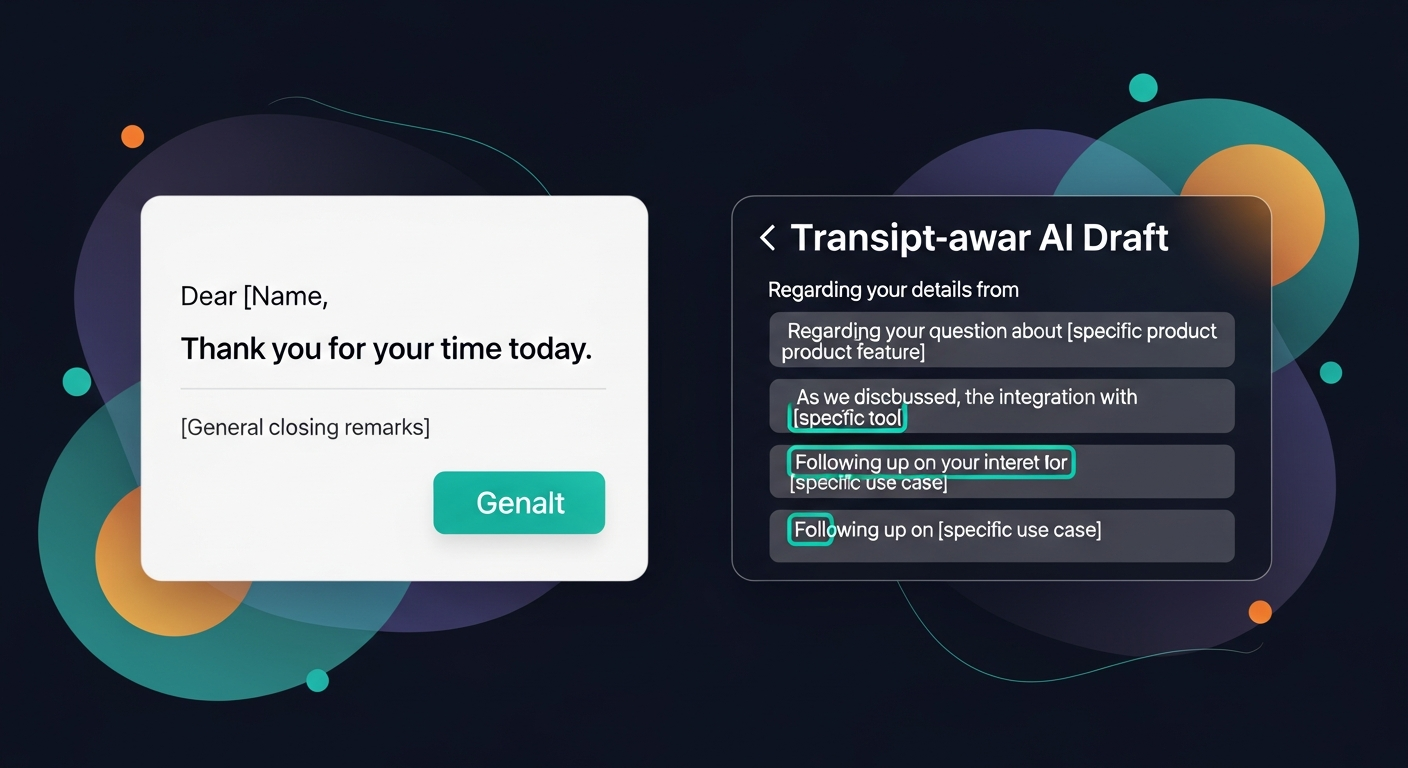

A recruiter wrapping up a candidate screen, a solo founder coming off a discovery call, an AE finishing a demo with a 6-person buying committee — all three have completely different conversations with completely different next steps. A template can't capture that. A transcript-aware AI draft can get you 80% of the way there in under a minute, and your edits get you the rest.

What "Voice-Fingerprint" Changes

One of the real objections to AI-drafted follow-up: it sounds like AI. That's a legitimate problem. Recipients notice. It undermines trust.

The answer isn't to avoid AI — it's to use a system that learns from your edits. When a rep consistently changes "I hope this email finds you well" to "quick note after our call," or removes certain phrases, or always adds a specific sign-off — a voice-fingerprint system captures those patterns and applies them to future drafts. Over time, the drafts start sounding like the rep, not like GPT defaults.

That's the difference between a tool that generates generic output and one that actually improves with use.
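The learning loop described above can be sketched as a word-level diff between each AI draft and the email the rep actually sent. This is a minimal sketch, not ReplySequence's implementation: the `VoiceFingerprint` class and its `min_count` threshold are hypothetical, it only learns phrase substitutions and deletions (not added sign-offs), and it relies on Python's standard `difflib.SequenceMatcher`.

```python
from __future__ import annotations

from collections import Counter
from difflib import SequenceMatcher


class VoiceFingerprint:
    """Hypothetical sketch: learn recurring phrase edits a rep makes to AI drafts."""

    def __init__(self, min_count: int = 3) -> None:
        # Assumed threshold: a substitution must recur this many times
        # before it is applied to new drafts automatically.
        self.min_count = min_count
        self.edits: Counter[tuple[str, str]] = Counter()

    def record(self, draft: str, sent: str) -> None:
        """Diff the AI draft against what the rep actually sent, word by word."""
        a, b = draft.split(), sent.split()
        matcher = SequenceMatcher(a=a, b=b, autojunk=False)
        for tag, i1, i2, j1, j2 in matcher.get_opcodes():
            if tag in ("replace", "delete"):
                # Store (draft phrase, rep's phrase); deletions map to "".
                self.edits[(" ".join(a[i1:i2]), " ".join(b[j1:j2]))] += 1

    def apply(self, draft: str) -> str:
        """Apply every substitution the rep has made at least min_count times."""
        for (old, new), count in self.edits.most_common():
            if count >= self.min_count and old in draft:
                draft = draft.replace(old, new)
        return draft


fp = VoiceFingerprint(min_count=2)
for name in ("Dana", "Sam"):
    fp.record(
        f"Hi {name}, I hope this email finds you well. Thanks for the call.",
        f"Hi {name}, quick note after our call. Thanks for the call.",
    )
print(fp.apply("Hi Lee, I hope this email finds you well. Here is the proposal."))
```

After the same edit shows up twice, the opener is swapped in new drafts. Plain string replacement is deliberately naive; a real system would also need to handle context, partial-word matches, and edits that only make sense in one email.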

Follow-Up Comparison: Where Each Approach Wins

Let me break this down without pretending there's a clean winner across every scenario.

Manual follow-up wins when:

- The deal is high-stakes and the buyer relationship is deeply personal

- You have the time to write something genuinely thoughtful and specific

- The prospect explicitly asked for something highly customized

AI-assisted (draft + human review) wins when:

- You're running 4+ calls a day and the follow-up queue is backing up

- The transcript contains specific commitments, objections, and next steps that need to be captured accurately

- Consistency across a multi-touch sequence matters (touch 1, touch 3, touch 5 — not just the first email)

- You're on a team where follow-up quality varies rep to rep

Fully automated (no review) loses when:

- The buyer can tell it's templated (which is increasingly often)

- The email doesn't reference anything from the actual conversation

- The sequence fires regardless of what happened on the call — even if the deal is dead

For most SDRs, AEs, and founders running their own sales, AI-assisted draft-first is the practical answer. It's not "set it and forget it" — and it shouldn't be. Draft-first means you stay in control. The AI handles the blank page, you handle the judgment.

The Sequence Problem No One Talks About

Most of the AI vs manual sales follow-up debate focuses on the first email. But closing deals usually requires multiple touches — a follow-up to the follow-up, a check-in after they've had time to review the proposal, a nudge when the thread goes cold.
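A multi-touch sequence only helps if it knows when to stop. The sketch below assumes a hypothetical three-touch schedule (the day offsets are placeholders, not a recommendation) and hypothetical `replied` and `deal_closed` flags coming from your inbox or CRM. It returns the next touch that is due, and returns nothing once the buyer replies or the deal closes, rather than firing on a timer regardless of what happened.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Touch:
    day_offset: int  # days after the meeting (placeholder values)
    purpose: str


# Hypothetical three-touch schedule; offsets are assumptions for illustration.
SEQUENCE = [
    Touch(0, "recap and next steps"),
    Touch(3, "check in on the proposal"),
    Touch(7, "nudge if the thread has gone cold"),
]


def next_touch(meeting: date, today: date, sent: int,
               replied: bool, deal_closed: bool) -> Touch | None:
    """Return the next touch that is due today, or None.

    `sent` is how many touches have already gone out. The sequence stops
    as soon as the buyer replies or the deal is closed.
    """
    if replied or deal_closed or sent >= len(SEQUENCE):
        return None
    touch = SEQUENCE[sent]
    if today >= meeting + timedelta(days=touch.day_offset):
        return touch
    return None
```

Tracking `sent` explicitly keeps the function stateless, so a scheduler can call it once a day per open deal.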

Manual sequences require willpower and systems. Most reps don't have both at once. Tools like HubSpot Sequences solve this, but Sequences comes bundled with HubSpot Sales Hub Professional, at $90+/seat/month at minimum, which prices out a lot of smaller teams that just need sequences, not an entire CRM platform.

That's the gap: most teams need post-meeting sequences without the enterprise CRM tax.

What the Research Actually Says About AI Sales Results

A few data points worth anchoring to:

- McKinsey's 2023 State of AI report found that sales and marketing functions see some of the highest ROI from AI adoption — specifically in tasks like content generation and personalization at scale.

- Salesforce's State of Sales report (2024 edition) found that high-performing sales teams are 1.9x more likely to use AI tools than underperformers — but the edge comes from AI augmenting human judgment, not replacing it.

- InsideSales.com research (the company later rebranded as XANT) found that 35% to 50% of sales go to the vendor that responds first. Speed of follow-up is a competitive advantage that compounds over a sales cycle.

None of that research says "replace your reps with automation." All of it points toward the same conclusion: AI handles the speed and consistency problem, humans handle the judgment and relationship problem.

The Verdict

AI vs manual sales follow-up isn't a binary choice — it's a spectrum, and the right answer depends on where you're losing deals. If deals are dying in the 48 hours after a good meeting, the problem is almost never the quality of manual writing — it's the delay. Speed beats polish when the buyer's attention is at its peak.

The hybrid approach — transcript-aware AI drafts, human review, then send — consistently outperforms both extremes. Faster than fully manual, more personal than fully automated. That's the follow-up comparison that actually matters.

The meeting went great. Don't let the follow-up be where the deal dies.

---

Start free at replysequence.com — 10 drafts/month, no credit card required. If you're running more than a few calls a week, the Pro plan is $29/mo and includes unlimited drafts, voice-fingerprint, and multi-touch sequences.

Related reading

- Sales Follow-Up Automation: Balancing AI Efficiency with Human Touch

- How to Trust AI-Generated Follow-Up Emails (And When to Edit Them)

- Can AI Write Better Follow-Up Emails Than a Human?

Get the weekly ReplySequence newsletter for more post-meeting follow-up tactics — subscribe at replysequence.com/newsletter.

How ReplySequence handles this

ReplySequence takes any meeting transcript (paste it in from Zoom, Teams, Meet, Webex, Fireflies, Granola, or wherever) and drafts a context-rich follow-up email in about 8 seconds. You review it, make any edits, and approve. Deal intelligence builds automatically.